Editor’s Note : In this article, guest contributor Paul Currion looks at the potential for crowdsourcing data during large-scale humanitarian emergencies, as part of our "Deconstructing Mobile" series. Paul is an aid worker who has been working on the use of ICTs in large-scale emergencies for the last 10 years. He asks whether crowdsourcing adds significant value to responding to humanitarian emergencies, arguing that merely increasing the quantity of information in the wake of a large-scale emergency may be counterproductive. Instead, the humanitarian community needs clearly defined information that can help in making critical decisions in mounting their programmes in order to save lives and restore livelihoods. By taking a close look at the data collected via Ushahidi in the wake of the Haiti earthquake, he concludes that crowdsourced data from affected communities may not be useful for supporting the response to a large-scale disaster.

: In this article, guest contributor Paul Currion looks at the potential for crowdsourcing data during large-scale humanitarian emergencies, as part of our "Deconstructing Mobile" series. Paul is an aid worker who has been working on the use of ICTs in large-scale emergencies for the last 10 years. He asks whether crowdsourcing adds significant value to responding to humanitarian emergencies, arguing that merely increasing the quantity of information in the wake of a large-scale emergency may be counterproductive. Instead, the humanitarian community needs clearly defined information that can help in making critical decisions in mounting their programmes in order to save lives and restore livelihoods. By taking a close look at the data collected via Ushahidi in the wake of the Haiti earthquake, he concludes that crowdsourced data from affected communities may not be useful for supporting the response to a large-scale disaster.

1. The Rise of Crowdsourcing in Emergencies

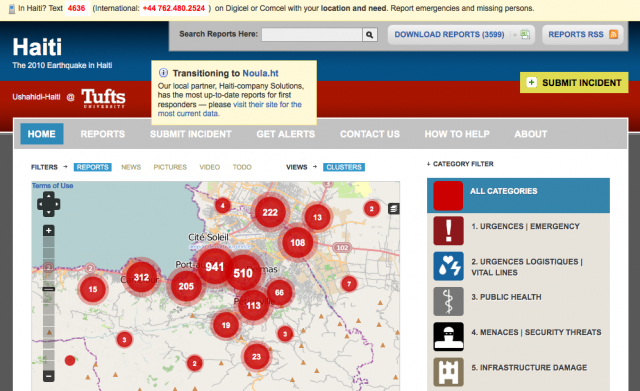

Ushahidi, the software platform for mapping incidents submitted by the crowd via SMS, email, Twitter or the web, has generated so many column inches of news coverage that the average person could be mistaken for thinking that it now plays a central role in coordinating crisis responses around the globe. At least this is what some articles say, such as Technology Review's profile of David Kobia, Director of Technology Development for Ushahidi. For most people, both inside and outside the sector, who lack the expertise to dig any deeper, column inches translate into credibility. If everybody's talking about Ushahidi, it must be doing a great job – right?

Maybe.

Ushahidi is the result of three important trends:

- Increased availability and utility of spatial data;

- Rapid growth of communication infrastructure, particularly mobile telephony; and

- Convergence of networks based on that infrastructure on Internet access.

Given those trends, projects like Ushahidi may be inevitable rather than unexpected, but inevitability doesn't give us any indication of how effective these projects are. Big claims are made about the way in which crowdsourcing is changing the way in which business is done in other sectors, and now attention has turned to the humanitarian sector. John Della Volpe's short article in the Huffington Post is an example of such claims:

"If a handful of social entrepreneurs from Kenya could create an open-source "social mapping" platform that successfully tracks and sheds light on violence in Kenya, earthquake response in Chile and Haiti, and the oil spill in the Gulf -- what else can we use it for?"

The key word in that sentence is “successfully”. There isn’t any evidence that Ushahidi “successfully” carried out these functions in these situations; only that an instance of the Ushahidi platform was set up. This is an extremely low bar to clear to achieve “success”, like claiming that a new business was successful because it had set up a website. There has lately been an unfounded belief that the transformative effects of the latest technology are positively inevitable and inevitably positive, simply by virtue of this technology’s existence.

2. What does Successful Crowdsourcing Look Like?

To be fair, it's hard to know what would constitute “success” for crowdsourcing in emergencies. In the case of Ushahidi, we could look at how many reports are posted on any given instance – but that record is disappointing, and the number of submissions for each Ushahidi instance is exceedingly small in comparison to the size of the affected population – including Haiti, where Ushahidi received the most public praise for its contribution.

In any case, the number of reports posted is not in itself a useful measure of impact, since those reports might consist of recycled UN situation reports and links to the Washington Post's “Your Earthquake Photos” feature. What we need to know is whether the service had a significant positive impact in helping communities affected by disaster. This is difficult to measure, even for experienced aid agencies whose work provides direct help. Perhaps the best we can do is ask a simple question: if the system worked exactly as promised, what added value would it deliver?

As Patrick Meier, a doctoral student and Director of Crisis Mapping and Strategic Partnerships for Ushahidi has explained, crowdsourcing would never be the only tool in the humanitarian information toolbox. That, of course, is correct and there is no doubt that crowdsourcing is useful for some activities – but is humanitarian response one of those activities?

A key question to ask is whether technology can improve information flow in humanitarian response. The answer is that it absolutely can, and that's exactly what many people, including this author, have been working on for the last 10 years. However, it is a fallacy to think that if the quantity of information increases, the quality of information increases as well. This is pretty obviously false, and, in fact, the reverse might be true.

From an aid worker’s perspective, our bandwidth is extremely limited, both literally and metaphorically. Those working in emergency response – official or unofficial, paid or unpaid, community-based or institution-based, governmental or non-governmental – don't need more information, they need better information. Specifically, they need clearly defined information which can help them to make critical decisions in mounting their programmes in order to save lives and restore livelihoods.

I wasn't involved with the Haiti response, which made me think that perhaps my doubts about Ushahidi were unfounded and that perhaps the data they had gathered could be useful. In the course of discussions on Patrick Meier's blog, I suggested that the best way for Ushahidi to show my position was wrong would be to present a use case to show how crowdsourced data could be used (as an example) by the Information Manager for the Water, Sanitation and Hygiene Coordination Cluster, a position which I filled in Bangladesh and Georgia. Two months later, I decided to try that experiment for myself.

3. In Which I Look At The Data Most Carefully

The only crowdsourced data I have is the Ushahidi dataset for Haiti, but since Haiti is claimed as a success, that seemed like to be a good place to start. I started by downloading and reading through the dataset – the complete log of all reports posted in Ushahidi. It was a mix of two datastreams:

- Material published on the web or received via email, such as UN sitreps, media reports, and blog updates, and

- Messages sent in by the public via the 4636 SMS shortcode established during the emergency.

I was struck by two observations:

- One of the claims made by the Ushahidi team is that its work should be considered an additional datastream for a id workers. However, the first datastream is simply duplicating information that aid workers are already likely to receive.

- The 4636 messages were a novel datastream, but also the outcome of specific conditions which may not hold in places other than Haiti. The fact that there is a shortcode does not guarantee results, as can be seen in the virtually empty Pakistan Ushahidi deployment.

I considered that perhaps the 4636 messages could demonstrate some added value. They fell into three broad categories: the first was information about the developing situation, the second was people looking for information about family or friends missing after the earthquake, and the third and by far the largest, was general requests for help.

I tried to imagine that I had been handed this dataset on my deployment to Haiti. The first thing I would have to do is to read through it, clean it up, and transcribe it into a useful format rather than just a blank list. This itself would be a massive undertaking that can only be done by somebody on the ground who knows what a useful format would be. Unfortunately, speaking from personal experience, people on the ground simply don't have time for that, particularly if they are wrestling with other data such as NGO assessments or satellite images.

For the sake of argument, let's say that I somehow have the time to clean up the data. I now have a dataset of messages regarding the first three weeks of the response. 95% of those messages are for shelter, water and food. I could have told you that those would be the main needs even before I arrived in position, so that doesn't add any substantive value. On top of that, the data is up to 3 weeks old: I'd have to check each individual report just to find out just whether those people are still in the place that they were when they originally texted, and whether their needs have been met.

Again for the sake of argument, let's say that I have a sufficient number of staff (as opposed to zero, which is the number of staff you usually have when you're an information manager in the field) and they've checked every one of those requests. Now what? There are around 3000 individual “incidents” in the database, but most of those contain little to no detail about the people sending them. How many are included in the request, how many women, children and old people are there, what are their specific medical needs, exactly where they are located now – this is the vital information that aid agencies need to do their work, and it simply isn't there.

Once again for the sake of argument, let's say that all of those reports did contain that information – could I do something with it? If approximately 1.5 million people were affected by the disaster, those 3000 reports represent such a tiny fraction of the need that they can't realistically be used as a basis for programming response activities. One of the reasons we need aid agencies is economies of scale: procuring food for large populations is better done by taking the population as a whole. Individual cases, while important for the media, are almost useless as the basis for making response decisions after a large-scale disaster.

There is also this very basic technical question: once we have this crowdsourced data, what do we do with? In the case of Ushahidi, it was put on a Google Maps mash-up – but this is largely pointless for two reasons. First, there's a simple question of connectivity. Most aid workers and nearly all the population won't have reliable access to the Internet, and where they do, won't have time to browse through Google Maps. (It's worth noting that this problem is becoming less important as Internet connectivity, including the mobile web, improves globally – but also that the places and people prone to disasters tend to be the last to benefit from that connectivity.)

Second, from a functional perspective, the interface is rudimentary at best. The visual appeal of Ushahidi is similar to that of Powerpoint, casting an illusion of simplicity over what is, in fact, a complex situation. If I have 3000 text messages saying "I need food and water and shelter”, what added value is there from having those messages represented as a large circle on a map? The humanitarian community often lacks the capacity to analyse spatial data, but this map has almost no analytical capacity. The clustering of reports (where larger bubbles correspond to the places that most text messages refer to) may be a proxy for locations with the worst impact; but a pretty weak proxy derived from a self-selecting sample.

In the end, I was reduced to bouncing around the Ushahidi map, zooming in and out on individual reports – not something I would have time to do if I was actually in the field. Harsh as it sounds, my conclusion was that the data that crowdsourcing of this type is capable of collecting in a large-scale disaster response is operationally useless. The reason for this has nothing to do with Ushahidi, or the way that the system was implemented, but with the very nature of crowdsourcing itself.

4. Crowdsourcing Response or Digital Voluntourism?

One of the key definitions of “crowdsourcing” was provided by Jeff Howe in a Wired article that originally popularised the term: taking “a job traditionally performed by a designated agent (usually an employee) and outsourcing it to an undefined, generally large group of people in the form of an open call.” In the case of Haiti, part of the reason why people mistakenly thought crowdsourcing was successful, was because there were two different “crowds” being talked about.

The first was the global group of volunteers who came together to process the data that Ushahidi presented on its map. By all accounts, this was definitely a successful example of crowdsourcing as per Howe's definition. We can all agree that this group put a lot of effort into their work. However, the end result wasn’t especially useful. Furthermore, most of those volunteers won't show up for the next response – and in fact they didn't for Pakistan.

The media coverage of Ushahidi focuses mainly on this first crowd – the group of volunteers working remotely. Yet, the second crowd is much more important: the affected community. Reading through the Ushahidi data was heartbreaking, indeed. But we already knew that people needed food, water, shelter, medical aid – plus a lot more things that they wouldn't have been thinking of immediately as they stood in the ruins of their homes. In the Ushahidi model, this is the crowd that provides the actual data, the added value, but the question is whether crowdsourced data from affected communities could be useful from an operational perspective of organising the response to a large-scale disaster.

The data that this crowd can provide is unreliable for operational purposes for three reasons. First, you can't know how many people will contribute their information, a self-selection bias that will skew an operational response. Second, the information that they do provide must be checked – not because affected populations may be lying, but because people in the immediate aftermath of a large-scale disaster do not necessarily know all that they specifically need or may not provide complete information. Third, the data is by nature extremely transitory, out-of-date as soon as it's posted on the map.

Taken together, these three mean that aid agencies are going to have to carry out exactly the same needs assessments that they would have anyway – in which case, what use was that information in the first place?

5. Is Crowdsourcing Raising Expectations That Cannot be Met?

Many of the critiques that the crowdsourcing crowd defend against are questions about how to verify the accuracy of crowdsourced information, but I don't think that's the real problem. It's the nature of an emergency that all information is provisional. The real question is whether it's useful.

So to some extent those questions are a distraction from the real problems: how to engage with affected communities to help them respond to emergencies more effectively, and how to coordinate aid agencies to ensure and effective response. On the face of it, crowdsourcing looks like it can help to address those problems. In fact, the opposite may be true.

Disaster response on the scale of the Haiti earthquake or the Pakistan floods is not simply a question of aggregating individual experiences. Anecdotes about children being pulled from rubble by Search and Rescue teams are heart-warming and may help raise money for aid agencies but such stories are relatively incidental when the humanitarian need is clean water for 1 million people living in that rubble. Crowdsourced information – that is, information voluntarily submitted in an open call to the public – will not ever provide the sort of detail that aid agencies need to procure and supply essential services to entire populations.

That doesn't mean that crowdsourcing is useless: based on the evidence from Haiti, Ushahidi did contribute to Search and Rescue (SAR). The reason for that is because SAR requires the receipt of a specific request for a specific service at a specific location to be delivered by a specific provider – the opposite of crowdsourcing. SAR is far from being a core component of most humanitarian responses, and benefits from a chain of command that makes responding much simpler. Since that same chain of command does not exist in the wider humanitarian community, ensuring any response to an individual 4636 message is almost impossible.

This in turn raises questions of accountability – is it wholly responsible to set up a shortcode system if there is no response capability behind it, or are we just raising the expectations of desperate people?

6. Could Crowdsourcing Add Value to Humanitarian Efforts?

Perhaps it could. However, the problem is that nobody who is promoting crowdsourcing currently has presented convincing arguments for that added value. To the extent that it's a crowdsourcing tool, Ushahidi is not useful; to the extent that it's useful, Ushahidi is not a crowdsourcing tool.

To their credit, this hasn't gone unnoticed by at least some of the Ushahidi team, and there seems to be something of a retreat from crowdsourcing, described in this post by one of the developers, Chris Blow:

One way to solve this: forget about crowdsourcing. Unless you want to do a huge outreach campaign, design your system to be used by just a few people. Start with the assumption that you are not going to get a single report from anyone who is not on your payroll. You can do a lot with just a few dedicated reporters who are pushing reports into the system, curating and aggregating sources."

At least one of the Ushahidi team members now talks about “bounded crowdsourcing” which is a nonsensical concept. By definition, if you select the group doing the reporting, they're not a crowd in the sense that Howe explained in his article. This may be an area where Ushahidi would be useful, since a selected (and presumably trained) group of reporters could deliver the sort of structured data with more consistent coverage that is actually useful – the opposite of what we saw in Haiti. Such an approach, however, is not crowdsourcing.

Crowdsourcing can be useful on the supply side: for example, one of the things that the humanitarian community does need is increased capacity to process data. One of the success stories in Haiti was the work of the OpenStreetMap (OSM) project, where spatial data derived from existing maps and satellite images was processed remotely to build up a far better digital map of Haiti than existed previously. However, this processing was carried out by the already existing OSM community rather than by the large and undefined crowd that Jeff Howe described.

Nevertheless this is something that the humanitarian community should explore, especially for data that has a long-term benefit for affected countries (such as core spatial data). To have available a recognised group of data processors who can do the legwork that is essential but time-consuming would be a real asset to the community – but there we've moved away from the crowd again.

7. A Small Conclusion

My critique of crowdsourcing – shared by other people working at the interface of humanitarian response and technology – is not that it is disruptive to business as usual. My critique is that it doesn't work – not just that it doesn't work given the constraints of the operational environment (which Ushahidi's limited impact in past deployments shows to be largely true), but that even if the concept worked perfectly, it still wouldn't offer sufficient value to warrant investing in.

Unfortunately, because Ushahidi rests its case almost entirely on the crowdsourcing concept, this article may be interpreted as an attack on Ushahidi and the people working on it. However, all of the questions I've raised here are not directed solely at Ushahidi (although I hope that there will be more debate about some of the points raised) but hopefully will become part of a wider and more informed debate about social media in general within the humanitarian community.

Resources are always scarce in the humanitarian sector, and the question of which technology to invest in is a critical one. We need more informed voices discussing these issues, based on concrete use cases because that's the only way we can test the claims that are made about technology. For while the tools that we now have at our disposal are important, we have a responsibility to use them for the right tasks.

Image credit: Urban Search and Rescue Team, with assistance from U.S. military personnel, coordinate plans before a search and rescue mission in order to find survivors in Port-au-Prince. U.S. Navy Photo.

| “If all You Have is a Hammer” - How Useful is Humanitarian Crowdsourcing? data sheet 21334 Views | |

|---|---|

| Countries: | Haiti |

It's too soon to tell

You have written a very thought-provoking piece. I hope it is read by many. However, I have to echo what Rob said:

"It seems to me that Ushahidi is a work in progress. You can't expect that a product developed in the way that it was, and put to such intensive early use in so many scenarios, would get everything right from the start."

I understand that you don't "see what use it would be to – specifically – somebody actually coordinating the delivery of humanitarian aid", but perhaps, just maybe, it will turn out to be something far more important or useful than any of us can currently imagine?

I don't think it is time to wholly dismiss the concept just yet.

I promise that I won't actually beat anybody who critiques me

Mark:

First, even mock threatening someone who critiques your argument (your reply to anonymous) is unprofessional. That type of reply doesn't encourage positive dialogue.

I realise that British humour doesn't translate well to the US, and that verbal humour in general doesn't translate well to the web. “Don't make me beat you” was what my old boss used to say whenever I asked him a difficult question. He never actually beat me.

I thought the “tough love” comment was amusing, my reply was simply responding in that vein and should in no way be taken to mean that I was threatening anybody. Thanks for the comments – I'll post a full reply tomorrow.

A fair critique, but...

Paul - this is a fair critique of how far "crowdsourcing" has gotten to today, but I agree with some of the other commenters that it is too early to pass such harsh judgment and to conclude that it will remain valueless (if indeed one accepts that it has been totally valueless to date, which I think is still arguable). I want to make five or so points.

First, even mock threatening someone who critiques your argument (your reply to anonymous) is unprofessional. That type of reply doesn't encourage positive dialogue.

Second, I think you are hung up on semantics; just because someone is calling something "crowdsourcing" that doesn't fit the original definition according to Jeff Howe make the discussion of these issues irrelevant. As you recognize, the community refers to a lot of things as "crowdsourcing" that is really something a bit different in your opinion; but don't dismiss it as a valueless part of the dialogue; definitions change over time. If the point you are trying to make is that crowdsourcing according to its original definition has no value for humanitarian purposes, even if the efforts of those who say they are crowdsourcing (but are not in your opinion) does have some value, and can't continue to evolve and innovate, then this discussion has digressed to a point where it can't be saved.

Third, you are absolutely 100% correct about the limited value to 99% of the information that came in through the 4636 system; but lives were saved through the Search and Rescue efforts - that makes it all worthwhile IMO even if that part was technically not "crowdsourcing". I think it is a fair critique to question the value of putting up a website with dots on a map if people are not helped directly by doing so. And I know that Ushahidi and others recognize that and are working to address those issues. But by totally ignoring efforts like Swift River or InSTEDD's RIFF project that are designed to make sense of streams of information like this, you are drawing conclusions based on an inaccurate picture of the current landscape of open source humanitarian systems and their future.

Fourth, I too passionately believe that "technology can improve information flow in humanitarian response" but I also believe that we can make sense of more information effectively and efficiently. There are a growing number of tools available (some of which are mentioned above) to organize and manage the additional data streams available through new technologies that are coming from many new sources (including the disaster victims themselves). Even OCHA recognizes that information management "in the context of humanitarianism should and must include those people [the general public] who are on the receiving end of humanitarian action."(1) How we do that effectively remains a challenge, but it is premature to declare defeat. The movement of volunteerism amongst the global technical community has proven itself effective through the Crisis Mappers Network, Crisis Commons, Random Hacks of Kindness and other voluntary communities.

Fifth, did you evaluate how effective ideas that come out of efforts such as Project Epic's Tweak the Tweet could become for general crowdsourcing? Tweak the Tweet was a progressive effort to positively encourage users of twitter to structure their requests for information or requests for assistance about Haiti to provide geographically referenced, structured and actionable information. I think the results were mixed, and not as effective as 4636. but building on the concept, what would be the impact on the usefulness of the information coming into a resource like Ushahidi if submissions/reports could be encouraged to be put into a format usable by and useful to humanitarian agencies?

I think your conclusion might be different if Ushahidi users filled out a more structured form from the web or their mobile device, which contained fields of information that were needed by humanitarian agencies to do their assessments.

It seems like a little early to be so definitive about the value of crowdsourcing. Let's see where we are in even 12 months.

Best regards,

Mark

continue to evolve to provide useful and actionable information.

Fourth,

Ethical considerations?

Ben:

I wonder if anyone organising - for example - the supply of clean water to Port-au-Prince soon after the earthquake needed very much detail from the general public at all. Some idea of where to find population concentrations, key facilities and the operational context (local partners, security, roads etc), might be enough in the early stages.

True enough in the early stages of the emergency. It quickly becomes necessary to identify local patterns of water management, but once again I find it hard to see how crowdsourcing would be useful there. We do need better survey data fairly quickly, but the question is: is crowdsourcing the most effective and efficient way to get the data you need?

If a 4636-type system is established to gather urgent individual needs, what ethical and practical obligations ensue from gathering that information?

This was something that I didn't include in the article, but something that has been worrying me greatly ever since I started thinking about this. When I said that reading through the Haiti data was heartbreaking, I meant it. Many of the 4636 messages are from people trying to cope with truly appalling situations, and I don't want to think about the people who texted 4636 in desperation – and never received a response. Who takes responsibility for that?

Are you saying I've got a small dataset?

That said, your critique rests on a very small data set. It seems to me that Ushahidi is a work in progress. You can't expect that a product developed in the way that it was, and put to such intensive early use in so many scenarios, would get everything right from the start.

Well, quite. On the other hand, let's not forget that we're talking about people's lives here, so failure has quite severe implications. You don't need a large dataset to prove that a concept has no legs, However I would welcome anybody from Ushahidi demonstrating how I've got this wrong. Also: Ushahidi is only an example. My concern is with the wider application of social media, and particularly crowdsourcing.

If the product and the company have not immediately met all expectations, I don't think that serves as a blanket indictment of the whole concept.

I agree, which is why I've tried here to imagine what it would be like if they did meet a minimal level of expectation. The answer is that I still don't see what use it would be to – specifically – somebody actually coordinating the delivery of humanitarian aid.

Ushahidi has opened several important dialogues - one about technology for humanitarian purposes, one about the utility of crowdsourcing vs. command-and-control, centralized information systems, and one about the potential of African IT entrepreneurship.

I'm not sure I agree with you. The dialogue about the potential of African IT entrepreneurship – possibly, but what is that dialogue, exactly? The dialogue about the utility of crowdsourcing is not something that Ushahidi seems to be interested in – there are too many assumptions loaded up at the start to call it dialogue. The dialogue about humanitarian IT has been going on for a long time, and one of my frustrations is that nobody seems to have paid any attention to that existing dialogue.

The potential of babies

Anonymous:

In my view, it is better to nurture the baby's potential, even though they already haven't completely demonstrated their value added sufficient to our liking, in hopes for all the things that they might become in the future.

I'm not sure that I like the baby metaphor, but let's look at it like this: if your baby is better off growing up to be a concert pianist, it's probably not a good idea to force them to train as a cage fighter.

Why kill the baby now?

Because resources are scarce, and might be more usefully allocated to other endeavours.

Is this just a bit of tough love from well-meaning uncle Paul?

Don't make me beat you.

If these infant

If these infant projects you speak of here can be likened to babies, it is to be expected that they will stumble around and lose their way a bit before they grow up. In my view, it is better to nurture the baby's potential, even though they already haven't completely demonstrated their value added sufficient to our liking, in hopes for all the things that they might become in the future. It is, after all, a future that we now cannot even imagine. A future in which I imagine that our babies will actually help take care of us in our old age.

I could be wrong. It is possible the baby really does not grow, mature, and exhibits no signs of progress. But if this is true, the baby will die of its own accord.

So, what could possibly be the value added in advocating killing the baby now, simply because it has not already demonstrated its full adult potential and future value added, sufficient to our liking? Why kill the baby now? Why not use the same exact insights you describe here (which, I might add, are truly insightful/beneficial and that the baby needs to learn in order to survive), as gems of insight to impart to the child to help it along the path or direction it needs to go? Or perhaps that has always been your intent, to offer tough love meant to help the baby grow, and I have misread you here. Is this just a bit of tough love from well-meaning uncle Paul?

Good questions - early days

As someone who has written a fair amount about Ushahidi both in my book and elsewhere, and is generally impressed with their approach, I'm pleased to see these questions being asked. Their model is so elegant and their story is so compelling that it has come to be seen as almost an afterthought to ask "does it work?" And, of course, that's the whole point.

That said, your critique rests on a very small data set. It seems to me that Ushahidi is a work in progress. You can't expect that a product developed in the way that it was, and put to such intensive early use in so many scenarios, would get everything right from the start.

If the product and the company have not immediately met all expectations, I don't think that serves as a blanket indictment of the whole concept. Ushahidi has opened several important dialogues - one about technology for humanitarian purposes, one about the utility of crowdsourcing vs. command-and-control, centralized information systems, and one about the potential of African IT entrepreneurship. I'd be shocked if they ended up being the last word on any of those subjects.

Couple of points

An impressive and interesting critique. I wanted to add a couple of points/questions.

1 You say: "Crowdsourced information – that is, information voluntarily submitted in an open call to the public – will not ever provide the sort of detail that aid agencies need to procure and supply essential services to entire populations."

I wonder if anyone organising - for example - the supply of clean water to Port-au-Prince soon after the earthquake needed very much detail from the general public at all.

Some idea of where to find population concentrations, key facilities and the operational context (local partners, security, roads etc), might be enough in the early stages.

2. If a 4636-type system is established to gather urgent individual needs, what ethical and practical obligations ensue from gathering that information? What if response is unlikely? What should be the governance that prevents it being a well-intentioned but cruel hoax? Or will the wisdom of the crowd weed out the underperformers naturally?

Post new comment